How The New Yorker’s augmented reality feature gave voices to ordinary items

Augmented reality is set to transform media as we move into a more networked society. News organizations are continually shifting into digital formats where AR can enhance coverage and offer new opportunities for in-depth reporting.

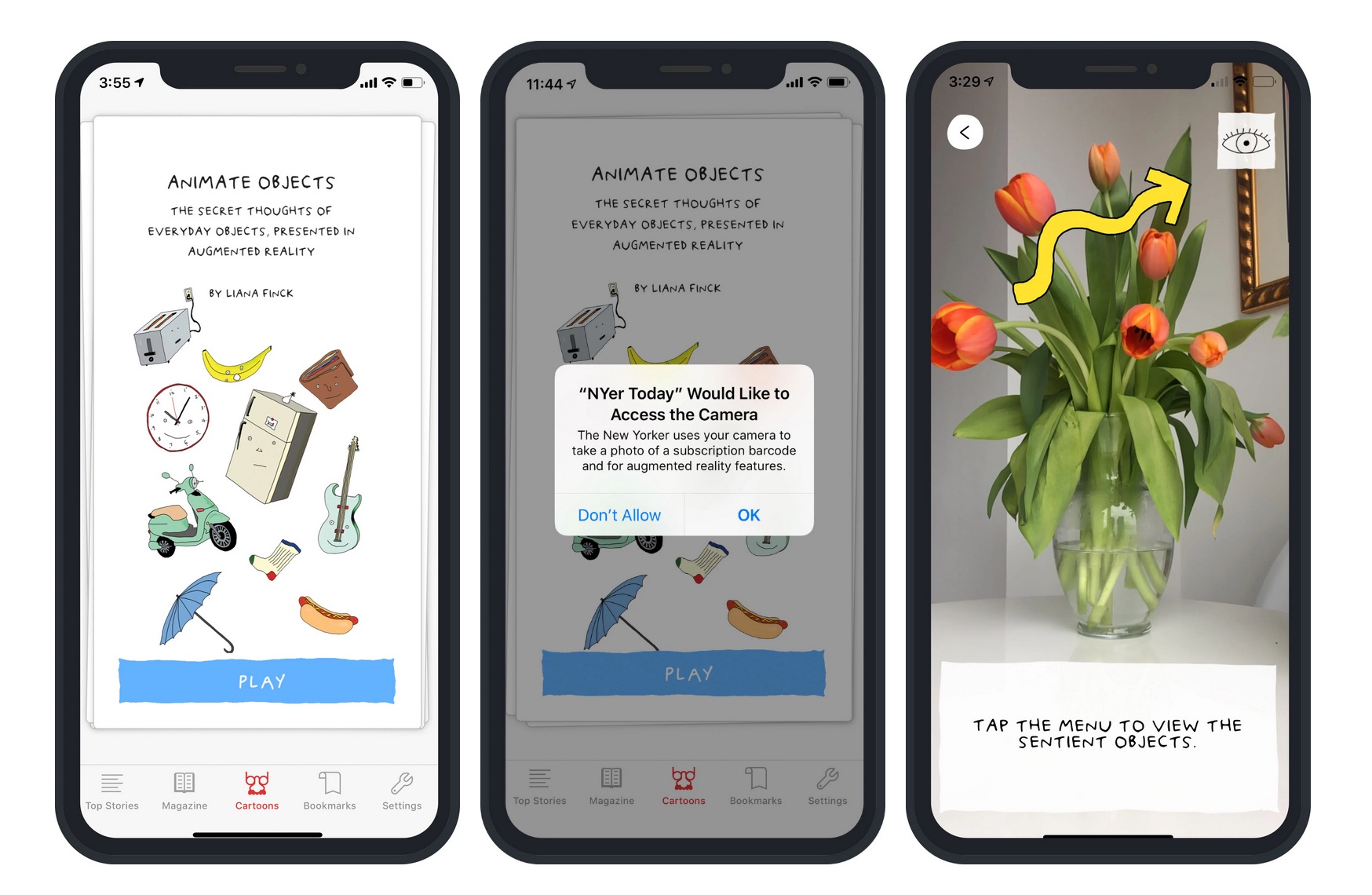

Last November, The New Yorker Today app introduced an AR feature, “Animate Objects.” It brings pre-selected inanimate objects – a menu of everyday things that users might have in their own lives and environment – to life through magazine cartoonist Liana Finck’s “photoons.” With AR applied, the objects are given funky personalities and even voices with witty jokes recorded by popular comedians. To have an integrated AR experience, users open the app and hover their phone over certain kinds of objects to trigger Finck’s annotations as well as narration, including the voices of comedians. As the app team explains: “This feature uses object detection to recognize items in your environment.”

With such AR features, readers are able to experience the news by participating in a story rather than just reading or watching it. Storybench spoke with Monica Racic, the director of production and multimedia at The New Yorker, about Animate Objects, storytelling through AR, and its future in the world of journalism.

How did the idea to develop the Animate Objects project come to mind?

In 2016 we produced an augmented reality cover with one of our artists, Christoph Niemann. That was the first time I worked on an augmented reality feature. It was quite exciting, and we were so pleased with how that project went. However, it required users to download a separate app. So the next time, we had a few goals in mind when we cooked up the idea for Animate Objects.

The first one was to integrate ARKit into our app (it is a native iOS app) so that we could start producing AR features without any barriers to entry for people who wanted to experience them. They can do it right within our own app. We also wanted to create an experience that encourages people to come back over time. A lot of the augmented reality features I was seeing at the time were mostly one-trick ponies. You opened it up, you looked at it and said, “Oh, that’s cool!” and never looked at it again. I wanted to make something that encouraged you to come back, that had an episodic quality to it. And I also really wanted to make a feature that could only be told in AR – it required your immediate surroundings in order to work.

We also wanted to innovate and use the latest technology in object detection, machine learning, and spatial audio. All of that was factored into the final project. So we decided we wanted to do something with the cartoonist Liana Finck, who is one of our regular contributors. She had experimented previously with the idea of what she called “photoons.” She would draw over photographs that she had taken, and we just felt that her hyperaware sense of humor was a natural fit for a story that was all about looking at the world around you with a tilt of the head. That’s how we created this dream team of producers to create Animate Objects.

How long did the project take, and how many people were involved?

There was a whole team of people. From ideation to publication, it probably took about a year, but we were also working on several other projects during that time. About 10 people worked on creating and producing the experience, aside from the humorists (in the experience there are audio jokes, so there were other performers and comedians involved).

How technologically challenging was the task?

This was a very interesting project. We were dealing with a lot of variables, such as object detection. The app not only has to properly recognize and categorize the objects it’s seeing; depending on how the user is holding their camera, the vantage point they’re standing from, or the lighting, it might also produce false positives or negatives. There was a lot of technical testing involved in building the project. It was quite a challenge, I would say.

Was it hard to decide on the selection of objects?

We really wanted to include items that most people would have in their immediate proximity when they first enter the experience, like a wallet or a pen. But we also wanted a nice variety of objects that required people to seek them out, like a shopping cart or a banana. In that way, there is a bit of a scavenger hunt quality to it, and there are also “easter eggs” we hid within the experience that you discover if you find those objects in the real world.

Do you plan to improve the variety of objects?

I think for now this is what we wanted to put out to the world. As I’ve said before, AR is a very interesting space, so who knows? We might be contributing more to it in the future.

What would you say was the primary goal of the new AR feature? Was it more targeted toward current subscribers or a potential new audience?

The primary goal with this feature is probably the same as with any other feature we produce, which is to tell a great story in a way that hopefully lives up to what our audience has come to expect from us. And if we do it well, then certainly the hope is to attract a new audience toward what we do and, hopefully, they subscribe. I think this feature tapped into our devoted audience that loves our humor pieces and cartoons, but it also serves people who are looking to experience innovative forms of storytelling. Producing a humor feature in augmented reality and getting all these physical aspects right for the visual and audio cues is quite challenging. I am happy to say we succeeded in that regard, and that was echoed in the reception we got.

What was the response like from your audience’s perspective?

On social and elsewhere we saw people discovering the objects and reacting to (and appreciating) the jokes. From our analytics, we can see the AR feature encouraged repeat visitation, so people went back to the feature, which is really encouraging. The user behavior mirrors the highly engaged interaction with our content that we’re seeing across The New Yorker Today app. That was really nice to see.

Would you consider the feature successful now, several months after its launch? And how would you define “success,” given that Animate Objects is neither a traditional news story nor a digitally enhanced investigation?

Yes. In terms of an editorial and a business objective, success would be to tell a great story, hopefully using innovative technology in a way that extends the rich storytelling tradition of the magazine, and also to attract new subscribers and get the word out about what we do.

In your previous interviews, I’ve read that AR excites you as you believe it’s a great enhancement to the traditional storytelling experience. What other projects do you have in mind going forward?

I think AR is really the next frontier for personalization. Visual storytellers have an opportunity to make a story immediately relevant to a person’s surroundings. There are a lot of exciting possibilities that come with that, and it’s definitely something we are thinking about. This is just a very good step in that direction.

Where do you see the future of AR as it relates to journalism?

I think it’s the opportunity for people to personalize stories, make them relevant to readers and lend that kind of immediacy. So I think it’s going to be another tool in the toolkit for journalists over the next several years.

Do you see reporters and writers around you interested in exploring AR or do they prefer other digital tools and techniques?

I think as with any new medium, once the adoption rate increases and more and more people are able to easily experience that medium from their devices, it becomes so ubiquitous, or better to say “accessible,” that there are a lot of opportunities to use it within the larger framework of journalistic storytelling. As adoption increases, I think you’ll see more and more journalists willing to use AR.